The new Blob is even scarier than the old one

With enemies like this, Skynet doesn't need friends

As the fifth anniversary of my seminal contribution to blobology approaches, there will naturally be widespread clamoring for me to take stock—to deliver a report on the state of the Blob (defined, loosely speaking, as the foreign policy establishment). As I prepare this anniversary opus, I thought I’d give NZN readers a preview—a report on the state of my state of the Blob report.

I’m afraid the news isn’t good.

I’m not referring to the obviously bad news: that one of the key takeaways from Blob studies—about the manifest idiocy of launching regime change wars, especially when the regime poses no imminent threat to any nation and no long-term threat to America—somehow failed to penetrate the brain of the current occupant of the White House, even though as a candidate he explicitly assured us that it had.

No, I bring even worse news than that. Namely: As the age of artificial intelligence dawns, the various people who make a living as Blobsters—the think tankers, the past and present government officials, the military-industrial-complex titans, the influential media figures—show few signs of getting the picture. Whereas the atomic age spawned lots of creative and even enlightened thinking about its revolutionary implications for national and international security, the age of AI seems so far to be having roughly the opposite effect.

In recent days, two paradigmatic examples of this problem have come to my attention. One involves the China Talk podcast, which for better or worse (plot spoiler: worse) has attained some prominence within the Blob, and indeed recently featured two important former government officials sharing their thoughts on artificial intelligence. The other example involves Palantir, the Silicon Valley defense contractor known for managing the AI-infused software that processed the list of opening-day targets for the Iran war—a list that seems to have included an elementary school whose student body is now largely dead.

I always encourage people who don’t know much about Palantir and are curious about it to start with this short video of Palantir CEO Alex Karp speaking at a public event a bit more than a year ago:

Why, when I was a boy, the men who ran the big defense contractors were mild-mannered and evinced a sense of decorum, maybe because they perceived that coming off as belligerent and semi-deranged would be bad for their brand. I’d like to think that they understood something Karp doesn’t, but I fear that, actually, we’re now living in an age when coming off as belligerent and semi-deranged can be good for your brand.

I have additional thoughts about Palantir, but they’ll be easier to process if I first spend a little time on China Talk. This podcast’s more dovish detractors sometimes call it China Hawk, and indeed, its host, Jordan Schneider, fits that description. I interviewed Schneider on the NonZero podcast some months ago and tried to challenge his China hawkism. Judging by his recent discussions of AI, my intervention was less than transformative.

Consider, for example, his take on the implications of Mythos, the new but as yet unreleased Anthropic large language model that, as I’ve noted before, has an unprecedented ability to find and exploit software vulnerabilities. Some people think Mythos foreshadows future AI threats to international stability that are best addressed through international cooperation. Nvidia CEO Jensen Huang recently suggested as much, a suggestion that triggered this response from Schneider on his podcast:

[Jensen] said, “Look, this is something that the US and China need to solve with dialogue.” I am sorry—No one is going to solve the fact that states want to hack other states and other state systems and read their secrets, and, like, maybe make things blow up, through dialogue. I mean this is not something like a global pandemic where it sort of threatens everyone.

Actually, this is like a global pandemic. Powerful and autonomous AI hackers, if not kept under control, could be very bad for the US and China, and for lots of other nations. For example: (1) These AIs could fall into the hands of a non-state actor, and the damage done by self-replicating autonomous hackers could cross borders, maybe resulting in the destruction of big chunks of the planet’s communications or energy or financial infrastructures; (2) One of the two AI superpowers, mindful of the growing cyberpower of the other, could misread some cyber-snafu as the opening wave of a cyberattack and then counterattack in fearful desperation, starting a conflict that would be catastrophic for both.

In both scenarios—and others I could dream up—the key thing is that a lose-lose outcome is possible, so the game is non-zero-sum. In other words: It’s like a pandemic—and like various other phenomena that should in theory lead nations to cooperate out of self-interest.

This isn’t rocket science! It’s game theory 101. And back when the foundations of nuclear strategy were being laid, awareness of these game-theoretic dynamics pervaded the discourse. Maybe that explains why the calls for US-China cooperation on the issues raised by Mythos have come mainly from old-timers like New York Times columnist Tom Friedman and the venerable Economist magazine and, well, me.

The rising generation of Blobsters, in contrast, seems curiously uninterested in even discussing, much less pursuing, such possibilities. A good illustration is a different recent episode of China Talk, featuring two Blobsters of some stature: Ben Buchanan, special advisor for AI in the Biden administration, and Michael Sulmeyer, assistant secretary of defense for cyber policy in the same administration. Though the entire conversation was about the implications of Mythos, neither mentioned the possibility of any kind of international agreement on AI cyberweapons—no treaty, no protocol, no memorandum of understanding, no nothing.

There was brief discussion of international “norms” that might constrain certain uses of AI. Buchanan suggested that, once AI gets better at helping people make bioweapons, then “maybe the norms save us at that point” but “I kind of doubt it, to be honest.” Sulmeyer sounded equally fatalistic. “Have we already missed that moment in AI? I’m not saying that a normative regime, you know, that we should be spending a ton of effort on that or not, just that if you’re asking about norms, that would be, I guess, my question: Is it already a little too late?”

But there’s another path that Buchanan and Sulmeyer are sure it’s not too late to pursue. “Near and dear to my heart is building an American lead in AI,” said Buchanan—and the conversation about Mythos had “reaffirmed the importance of doing that.” Sulmeyer echoed the importance of “the leaders of America’s warfighters” understanding how important it is that “our offensive cyberoperators maintain and extend a competitive advantage.”

Meanwhile, Chris McGuire, their colleague in the Biden administration and now a senior fellow at the Council on Foreign Relations, wrote in the Financial Times: “The consequences of losing the AI arms race are no longer theoretical. AI models are now the decisive offensive and defensive tools in cyber space, and American and allied cyber security depends on maximizing the US lead over China in AI.” McGuire advocated tightening already tough restrictions on the export of chips and chipmaking equipment to China.

So what could the success of McGuire’s plan look like? Here’s a possibility you won’t hear from him: If America’s estimated seven-month lead in the AI race persists or even grows, and AI approaches levels of power that could confer utter dominance on the nation in the lead, China’s incentive to stage a pre-emptive strike would grow (as noted in a much-discussed paper called “Superintelligence Strategy,” co-authored by Dan Hendrycks, with whom I discussed it on the NonZero podcast). For example, China might attack Taiwan, the location of the factories that produce all those advanced AI chips that, thanks to US export controls, flow to the West but not to China.

It strikes some people as almost unbearably ironic to say that America’s national security could be served by restraint in the development of powerful weapons. But life is full of irony! And there was a time when influential people in the national security establishment understood that. More than half a century ago, some of them realized that, though “missile defense” sounds like a security blanket, it could be the opposite: If nuclear powers started developing missile defenses on a large scale, that could increase the chances of nuclear war by undermining the logic of mutually assured destruction. So Richard Nixon—no hippie peacenik—signed the Anti-Ballistic Missile Treaty; he chose to keep America’s nuclear missiles vulnerable because, so long as the Soviet Union verifiably reciprocated, that would keep both nations safer.

I grant that maintaining global stability in the age of AI is much more challenging than it was in Nixon’s day. To take just one example: A key to nuclear deterrence is that you’d know where incoming missiles were fired from, so the attacker could expect retaliation—and, as many analysts have noted, cyberattacks, in contrast, don’t generally have a clear return address. So, in the age heralded by Mythos, you can’t count on deterrence to keep the peace.

But don’t bother Alex Karp with details like that. Palantir this week posted a long tweet that was a bullet-point summary of the bestselling book The Technological Republic, which Karp co-authored. Bullet point number 12: “One age of deterrence, the atomic age, is ending, and a new era of deterrence built on AI is set to begin.”

It will definitely be a new era—we agree on that.

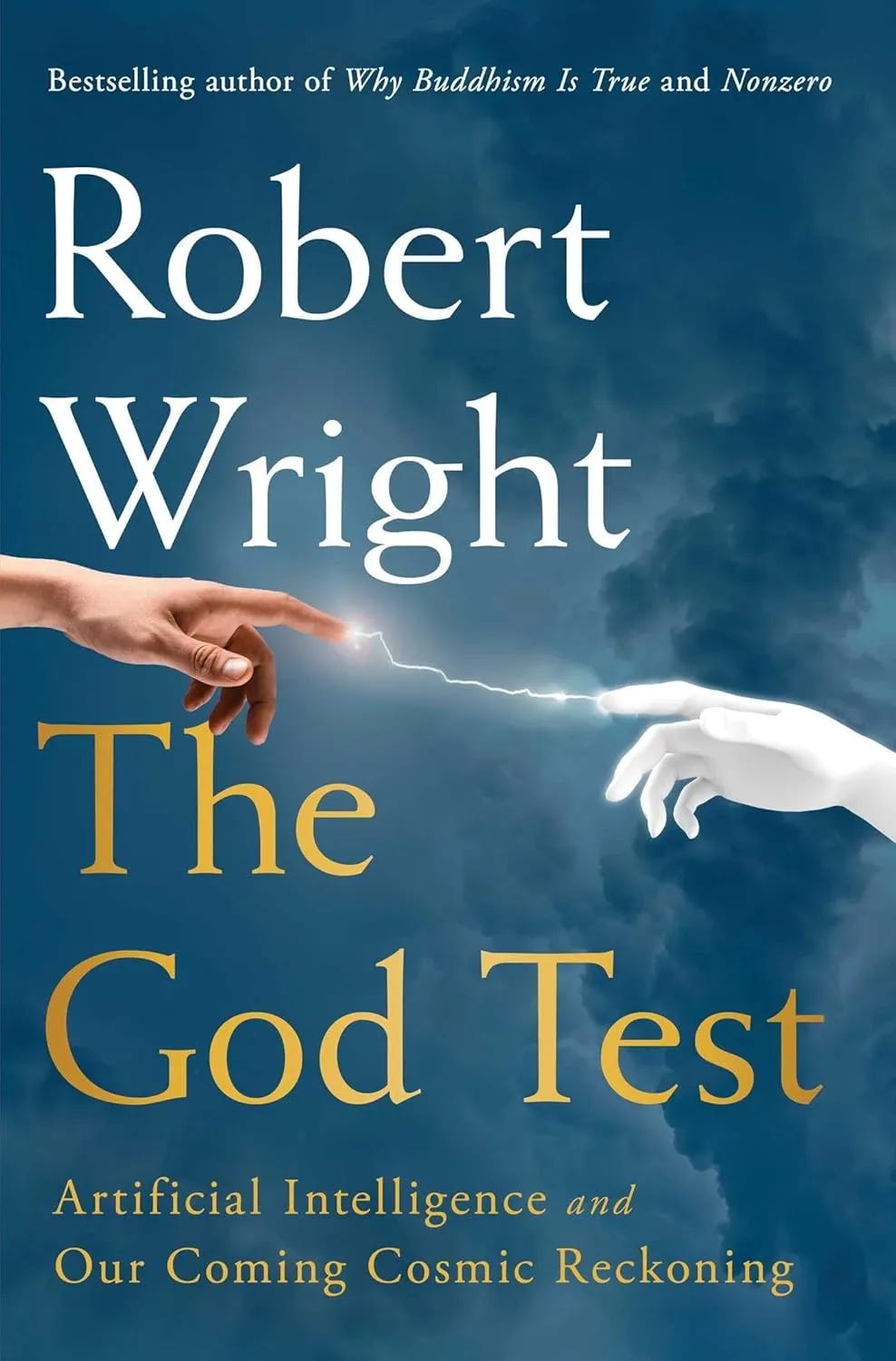

Feel free to pre-order my book on AI, which comes out in June:

Banners and graphics by Clark McGillis. “The Blob” lede art by Nikita Petrov.