It’s been an eventful week for Dario Amodei.

As of Monday, he was known as CEO of the increasingly famous AI company Anthropic, which makes the chatbot Claude and the wildly successful programming agent Claude Code. By Friday evening he had been catapulted from mere prominence to hero status in the eyes of many if not most members of some pretty big constituencies: anti-militarists, civil libertarians, the AI safety community, and (biggest of all) people who don’t like Donald Trump or Pete Hegseth.

But heroism, by definition, carries risks. After Amodei spent the week refusing to budge in a standoff with the Pentagon over the military uses of Anthropic’s AI, Trump went thermonuclear. The administration said not only that the entire federal government will end its use of Anthropic technology but that Anthropic would be designated a “supply-chain risk”—meaning that no Pentagon contractors can use Anthropic technology in business related to their contracts.

I won’t try to predict the course of this fluid story, aside from noting that, as usual with Trump, there’s a chance that one or both of these dramatic declarations won’t stay operative. (Some observers think the uber-draconian supply-chain-risk designation, never before applied to an American company, is on shaky legal ground.) But I would like to dispel some of the mythology surrounding this story—in particular, some myths that have crystallized as part of the heroization of Amodei.

Don’t get me wrong: I think Amodei deserves great credit for showing some spine (and, by prevailing Silicon Valley standards, a lot of spine) in the face of the Trump administration’s trademark thuggishness; Pentagon Chief Hegseth had threatened to play the supply-chain-risk card if Amodei didn’t back down, and Amodei didn’t back down. And I’m rooting for Anthropic to somehow, in some sense, prevail; if this government bullying of a big AI company becomes an enduringly intimidating precedent, that could come back to haunt us.

Still, if you, like me, worry about the American military’s use of AI, I encourage you not to take Amodei as some kind of paragon of virtue. I also encourage you not to take Anthropic’s policy on the military use of its technology as the kind of thing that can save us from the horrors that an AI-empowered American military could inflict on the world. Dario Amodei is a very impressive and apparently earnest person, but I don’t think he’s the hero we’re looking for.

The dispute between the Pentagon and Anthropic was about contractual language. The Pentagon wanted Anthropic to agree to “all lawful uses” of its technology, period, whereas Amodei wanted to add explicit guarantees that the technology wouldn’t be used in the mass surveillance of Americans or in fully autonomous weapons. This gave rise to…

Myth #1: that Amodei opposes the use of fully autonomous weapons. In an essay published last month, Amodei wrote that “fully autonomous weapons… have legitimate uses in defending democracy.” He just thinks the technology isn’t reliable yet and for the time being could endanger civilians and “America’s warfighters.” So, he wrote in that same essay, we shouldn’t “rush into” the use of fully autonomous weapons “without proper safeguards.” The Pentagon professes to agree. A Pentagon official said this week that its own regulations preclude leaving human judgment entirely out of the AI “kill chain” and so, given the contractual language about “lawful uses,” render the additional language Amodei wanted superfluous.

Apparently that’s not a crazy claim. In an interview with the Verge, Hamza Chaudhry, a national security expert at an AI safety NGO, described the relevant regulation this way: “DoD Directive 3000.09 requires that all autonomous weapon systems be designed so that commanders and operators be able to ‘exercise appropriate levels of human judgment over the use of force,’ and the Political Declaration on Military Use of AI launched by the US Government and endorsed by 50 states enshrines this principle.”

Myth #2: That Amodei is anti-militarist, or at least has a narrow view of the appropriate use of military force. Amodei’s geopolitical views overlap with those of neocons and many liberal interventionists: He sees the world as a global struggle between democracies and authoritarian autocracies—China in particular—and thinks the former should use superior weapons technology to dominate the latter. In a 2024 essay he said the US should lead a coalition of democracies that uses export controls and advanced AI to “achieve robust military superiority,” after which these democracies could “parlay their AI superiority into a durable advantage,” perhaps even achieving “an ‘eternal 1991’—a world where democracies have the upper hand.” Repressive autocracies like China wouldn’t get to share in our superior AI technology until they “give up competing with democracies.”

I think Amodei’s China hawkism is grounded in sincere concern about authoritarianism and the use of AI by authoritarian powers (even if the strategy that grows out of this concern—the strategy I just summarized—is completely bonkers for several reasons, including its conduciveness to getting American data centers bombed and Taiwan invaded and the world engulfed in a nuclear fireball). This concern about authoritarianism—and Amodei’s well-articulated concerns about the new forms mass surveillance could take via AI—are one reason I think the motivation behind his standoff with the Pentagon was earnest.

But I suspect that one byproduct of his concern about authoritarianism, in a geopolitical context, is a fairly broad comfort with the use of military force against authoritarian nations, going well beyond China. Certainly he seems to have been fine with the use of Anthropic technology in a recent attack on one authoritarian nation—Venezuela.

There’s been some misunderstanding about this, owing to reports that tension between Anthropic and the Pentagon started to heat up in January after an Anthropic employee asked a Palantir executive for details about how exactly Claude had been used in the Venezuela attack. Apparently word of this curiosity reached the Pentagon, and Hegseth got annoyed. Some people have inferred from this story that Anthropic objected to Claude’s use in an unprovoked attack that plainly violated international law and got lots of people killed. Sadly, no. Anthropic has made it clear that it wasn’t objecting to the fact that Claude had played some role in the invasion—just curious about what role.

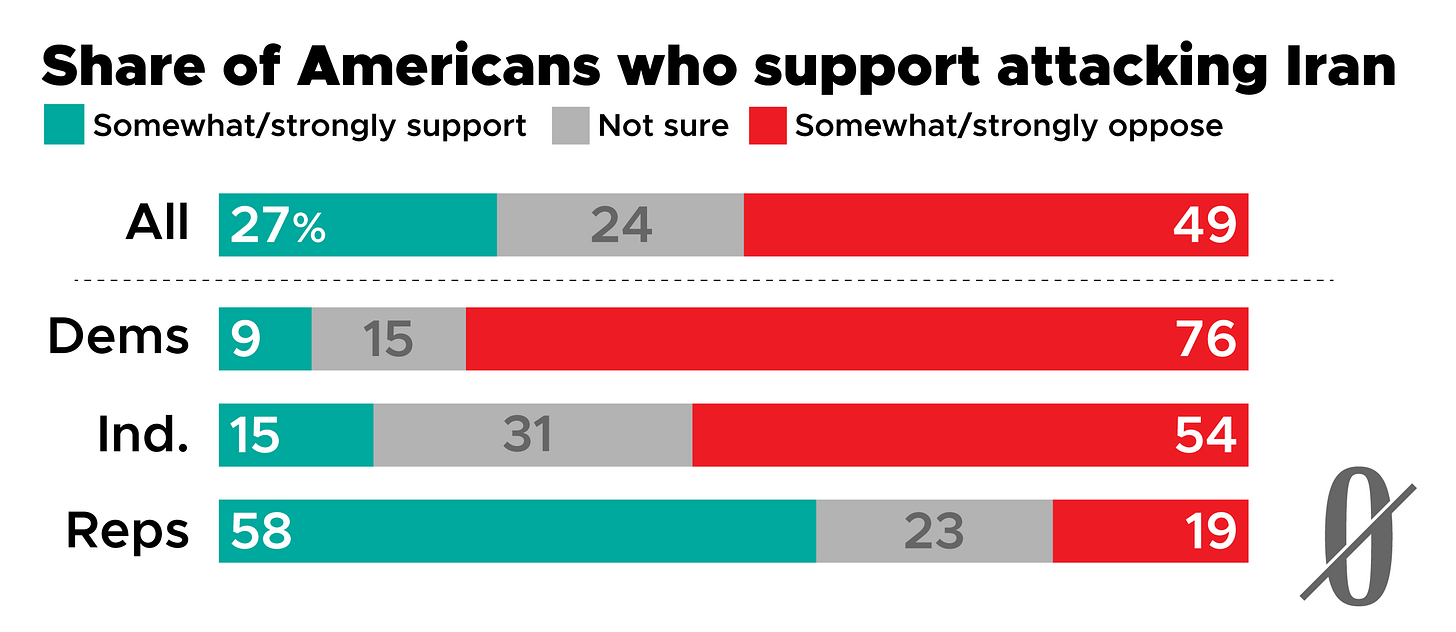

And Amodei hasn’t raised any concerns about the prospect that Claude could play a role in an equally illegal attack on another authoritarian nation, Iran, even though that could happen any day now and the question of what military roles Anthropic does and doesn’t object to was the topic of the week.

This gets to my main reason for discouraging my fellow advocates of military restraint from putting much hope in the position Anthropic took this week. All of the big AI companies—Google, OpenAI, xAI, and Anthropic—decided some time ago to become defense contractors, even if that meant (as it did in OpenAI’s case) abandoning prior policies precluding the military use of their technology. So it would seem that the reckless belligerence that long ago became America’s hallmark (and that I documented in last week’s Earthling) will increasingly be AI-empowered, unless we either engineer a fundamental change in US policy or somehow shame lots of AI companies into suddenly denying their technology to the Pentagon. In any event, the kind of language Amodei wanted to insert in those Pentagon contracts, however laudable, isn’t going to do the trick.

In a sense it doesn’t bother me that Google and OpenAI and xAI agreed to sign contracts with the Pentagon authorizing it to make “all lawful uses” of their technology, period. I’d have been OK with that if they’d insisted that “lawful” be taken to mean “compliant with both national and international law.” But of course none of them have raised that question. And of course, for all the discussion of this week’s Anthropic-Pentagon standoff, nobody in the entire American foreign policy establishment, so far as I can tell, has raised it either.

That’s what needs to change. Dario Amodei, given his well-earned stature, could now begin that change—if he were so inclined.

PS If you’re disappointed that I only addressed two myths after that big mythbusting buildup, I bring good news: Below, after the graphs of the week, I tackle a third Dario Amodei myth: that he has an unyielding commitment to AI safety.

Bonus Mythbusting!

Myth #3: that Dario Amodei has an unyielding commitment to AI safety

First, a little background:

In 2020, Amodei, a researcher who had played an important role in the development of the modern large language model, left OpenAI, and the following year he started Anthropic along with other OpenAI employees who shared his disenchantment with Sam Altman’s leadership. The mission of the new company, they said, was to take AI safety seriously.

Central to this pledge was Anthropic’s “Responsible Scaling Policy.” As Time described the RSP’s central pillar in 2024, Anthropic had “voluntarily constrained itself: pledging not to release AIs above certain capability levels until it can develop sufficiently robust safety measures. Amodei hopes this approach—known as the Responsible Scaling Policy—will pressure competitors to make similar commitments, and eventually inspire binding government regulations.” Anthropic became known for paying lots of attention to, for example, each new model’s potential to help bad actors build bioweapons (a potential that has grown).

But this week Anthropic disclosed to Time that it has abandoned the core of the Responsible Scaling Policy and will now release models without being confident of their safety. Anthropic’s chief scientist told Time: “We didn’t really feel, with the rapid advance of AI, that it made sense for us to make unilateral commitments… if competitors are blazing ahead.”

This disclosure had two earmarks of public relations mastery: (1) It came in an interview with second-tier media outlet Time, and the fact that it was a Time “exclusive” meant that first-tier outlets—the New York Times, the Wall Street Journal, the Washington Post—would play it less prominently than they otherwise would have. (2) The story was released just as publicity about the Anthropic-Pentagon fight was climaxing, thus further ensuring its burial.

Amodei’s stand against the Pentagon had its own kind of public relations value—including inside Anthropic. Anthropic got some of its founding energy (and money) via the “effective altruism” movement, and many employees signed on because they thought of Anthropic as more idealistic, and more committed to responsible AI development, than the other big AI companies. No doubt there was some discontent within the ranks over Anthropic’s abandoning (or, as Anthropic would put it, overhauling) its Responsible Scaling Policy, and no doubt pride over Amodei’s stand against the Pentagon helped counteract that discontent. I don’t think this was by any means his only motivation for standing firm against the Pentagon, but it couldn’t have hurt.

Banners and graphics by Clark McGillis.

If Hegseth can't get Claude to do mass surveillance or automate killbots for him, why doesn't he just try "talking to a librarian"?

The guy just took a significant hit to stand up for civil liberty. He could have gone all in on the "If we don't do it [mass surveillance, fully autonomous weapons], they [China, some more evil AI company] will first" framing and just gone with it, but he showed spine instead.

So many political movements, particularly on the left, fail because different fragments fail to meet each other's definition of moral purity, so they can't unite, and become irrelevant. I generally agree with the whole Nonzero framing of things: we face a lot of tragedy-of-the-commons-type problems and need to push in the direction of global cooperation and rule of law to solve them. But it's easy to profess moral purity when you have no power. The reality is, arms-race dynamics are real, and it sometimes IS true that if you don't do it, they will. You need to strike some balance between staying in power and following your principles, otherwise you join the ranks of people powerlessly shouting from distant bleachers. This time it seems Anthropic chose principles over power. Sure, you may cynically say this was some kind of clever power play on their part. But it's definitely the less-bad decision between the two. If you disagree, imagine a world with a Hegseth-controlled superintelligence.

I get that Anthropic is pushing the very "democracy vs autocracy" / "if we don't do it, they will" narrative that Nonzero is against. But when someone you generally disagree with takes an action you agree with, isn't it more productive to praise the agreeable action, rather than use it as an opportunity to slam that person for all the disagreeable things?